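LLMs: Translation and Commentary on "Rethinking open source generative AI open-washing and the EU AI Act"

Overview: This paper rethinks what "open source" means for generative AI models and presents a framework for assessing openness.

Background: Over the past year many language models have claimed to be open source, but how open are they really?

>> A growing number of large language models and generative AI systems claim to be "open source", but their actual degree of openness is questionable.

>> The upcoming EU AI Act will grant "open source" systems certain privileges and exemptions, which makes a clear definition of "open source" AI systems more urgent.

>> Some providers engage in "open-washing": they release only the model weights and keep other parts, such as the training data, undisclosed, thereby evading scientific and legal scrutiny.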

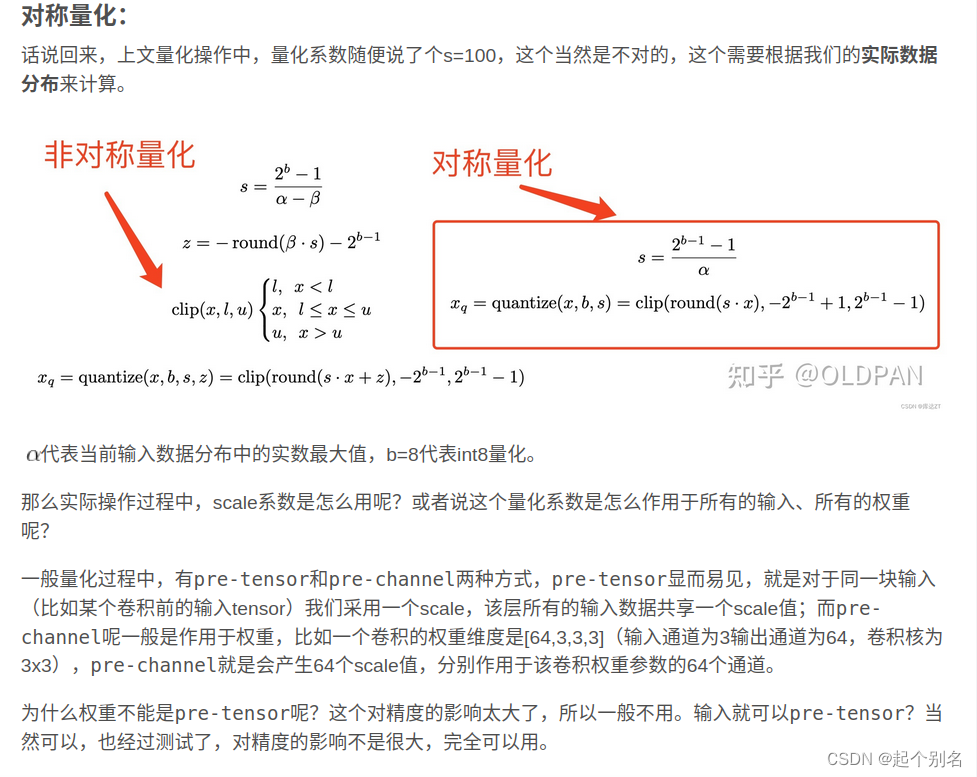

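Solution: Treat openness as composite (made up of multiple dimensions) and gradient (a matter of degree rather than a binary property).

>> Proposes an evidence-based assessment framework that treats openness as a composite of multiple dimensions, each with its own degree of openness.

>> Identifies 14 key dimensions for assessing openness, including source code, training data, model weights, documentation, and licences.

>> Applies the framework systematically to 40 large language models and 6 text-to-image models.

Approach and method: Each dimension is rated at one of three levels (open / partially open / closed); for every model, each dimension receives an evidence-based judgement; the judgements are then aggregated into an overall openness score.

>> Distinguishes the multiple components of an open source AI system rather than simply treating the system as open or closed.

>> Assesses and scores each component in detail according to its degree of openness, producing a composite openness assessment.

>> Publishes the framework and the assessment process openly, invites community review, and encourages open source contributions.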
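The scoring idea described above (three levels per dimension, an evidence-backed judgement for each, then aggregation) can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the numeric values assigned to the levels (1 / 0.5 / 0), the five-dimension subset, the helper name `openness_score`, and the example system are all assumptions made for the sketch. The paper itself distinguishes 14 dimensions.

```python
from enum import Enum


class Level(Enum):
    """Three-level judgement per dimension (numeric values are an assumption)."""
    CLOSED = 0.0
    PARTIAL = 0.5
    OPEN = 1.0


# Illustrative subset of the dimensions named in the summary; the paper uses 14.
DIMENSIONS = ["source_code", "training_data", "model_weights",
              "documentation", "licence"]


def openness_score(judgements: dict[str, tuple[Level, str]]) -> float:
    """Sum the per-dimension levels; each judgement must carry evidence."""
    total = 0.0
    for dim in DIMENSIONS:
        level, evidence = judgements[dim]
        if not evidence:
            raise ValueError(f"no evidence recorded for {dim}")
        total += level.value
    return total


# Hypothetical system, not taken from the paper's survey.
example = {
    "source_code": (Level.PARTIAL, "inference code only"),
    "training_data": (Level.CLOSED, "no dataset disclosed"),
    "model_weights": (Level.OPEN, "weights downloadable"),
    "documentation": (Level.PARTIAL, "model card without datasheet"),
    "licence": (Level.PARTIAL, "custom licence with use restrictions"),
}

score = openness_score(example)
print(f"{score} / {len(DIMENSIONS)}")  # prints "2.5 / 5"
```

Storing the evidence string next to each level keeps the judgement auditable, which matches the framework's emphasis on evidence rather than on a provider's own "open source" label.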

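Advantages: Distinguishes pretend-open from genuinely open source systems; gives regulators a reference point for defining open source; helps users choose models; strengthens the accountability of model providers.

>> Helps counter open-washing and prevents misuse of the "open source" label.

>> Offers practical suggestions on the "sufficiently detailed summary" for the upcoming EU AI Act.

>> Allows model providers to be held accountable, scientists to scrutinise systems, and end users to make informed choices.

>> Promotes transparency in AI systems, encourages responsible innovation, and protects a genuine open source culture.

Outlook:

>> Future work needs to focus on the openness of datasets.

>> Account for the particularities of different models and tailor the framework to different model types.

>> Keep the results updated over the long term to steer AI development in a healthier direction.

In summary, the paper proposes a composite and gradient framework for assessing openness, aiming to clarify how open current AI systems really are and to inform future AI governance.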

Contents

Translation and Commentary on "Rethinking open source generative AI open-washing and the EU AI Act"

Abstract

4 Conclusion

Translation and Commentary on "Rethinking open source generative AI open-washing and the EU AI Act"

| Link | Paper: https://dl.acm.org/doi/pdf/10.1145/3630106.3659005 |

| Date | 5 June 2024 |

| Authors | Centre for Language Studies, Radboud University Nijmegen, The Netherlands |

Abstract

| The past year has seen a steep rise in generative AI systems that claim to be open. But how open are they really? The question of what counts as open source in generative AI is poised to take on particular importance in light of the upcoming EU AI Act that regulates open source systems differently, creating an urgent need for practical openness assessment. Here we use an evidence-based framework that distinguishes 14 dimensions of openness, from training datasets to scientific and technical documentation and from licensing to access methods. Surveying over 45 generative AI systems (both text and text-to-image), we find that while the term open source is widely used, many models are ‘open weight’ at best and many providers seek to evade scientific, legal and regulatory scrutiny by withholding information on training and fine-tuning data. We argue that openness in generative AI is necessarily composite (consisting of multiple elements) and gradient (coming in degrees), and point out the risk of relying on single features like access or licensing to declare models open or not. Evidence-based openness assessment can help foster a generative AI landscape in which models can be effectively regulated, model providers can be held accountable, scientists can scrutinise generative AI, and end users can make informed decisions. |

4 Conclusion

| The EU AI Act is at risk of tying itself to a moving target: a licence-based definition of ‘open source AI’ that itself is evolving. A licence and its definition forms a single pressure point that will be targeted by corporate lobbies and big companies. The way to subvert this risk is to use a composite and gradient approach to openness. That makes it possible to cut through the knot of competing stakes and actors trying to influence the definition of open source AI, and to arrive at meaningful, evidence-based, multidimensional openness judgements. Such judgements can be used for individual models by potential users to make informed decisions for or against deployment of a particular architecture or model. They can also be used cumulatively to derive overall openness scores, and more reductively to classify systems into shades of openness or to define an openness cutoff for regulatory purposes. |

| Datasets represent the area that is most lagging behind in openness. Despite the challenges of openly sharing all data, we think full disclosure is where a key to meaningful openness lies. Work on AI safety and reproducibility has long pointed out the crucial importance of training data for understanding model performance, ensuring reproducibility, and assessing legal exposure [2, 25, 29, 46, 58]. Full openness is not always the solution: after all, even fully open systems can do harm and may be legally questionable. However, open is better than closed in most cases, and knowing what is open and how open it is can help everyone make better decisions. Openness is important for risk analysis (the public needs to know); for auditability (assessors need to know); for scientific reproducibility (scientists need to know); and for legal liability (end users need to know). |

| Our survey has offered a first glimpse at the detrimental effects of open-washing by companies looking to evade scientific, regulatory and legal scrutiny. And our framework hopefully offers the tools to counter it and to contribute to a healthy and transparent technology ecosystem in which the makers of models and systems can be held accountable, and users can make informed decisions. |
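The conclusion's point about the risk of a licence-based definition, compared with a composite score and an "openness cutoff", can be made concrete with a small Python sketch. Everything here is hypothetical: the two systems, their per-dimension values (1 = open, 0.5 = partially open, 0 = closed), the function names, and the 0.7 cutoff are assumptions chosen for illustration, not figures or rules from the paper or the EU AI Act.

```python
# Hypothetical per-dimension judgements: 1 = open, 0.5 = partial, 0 = closed.
systems = {
    "model_a": {"source_code": 1.0, "training_data": 1.0, "model_weights": 1.0,
                "documentation": 0.5, "licence": 1.0},
    "model_b": {"source_code": 0.0, "training_data": 0.0, "model_weights": 1.0,
                "documentation": 0.5, "licence": 1.0},
}


def open_by_licence(scores: dict[str, float]) -> bool:
    """Single-feature rule: a system counts as open if its licence is open."""
    return scores["licence"] == 1.0


def open_by_composite(scores: dict[str, float], cutoff: float = 0.7) -> bool:
    """Composite rule: mean openness across dimensions must reach the cutoff."""
    return sum(scores.values()) / len(scores) >= cutoff


results = {
    name: (open_by_licence(scores), open_by_composite(scores))
    for name, scores in systems.items()
}

for name, (by_licence, by_composite) in results.items():
    print(f"{name}: licence-only={by_licence}, composite={by_composite}")
```

In this sketch both systems pass the licence-only rule, but only `model_a` passes the composite rule, because `model_b` withholds its source code and training data. That is the open-weight pattern the paper describes as open-washing.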